Microsoft is strengthening its computing infrastructure offerings with two new in-house chips for enterprises. It introduced Azure Maia 100 and Azure Cobalt 100 at Microsoft Ignite 2023, the tech giant’s largest annual global event, held in Seattle. The chips are intended to give enterprises efficient, scalable, and sustainable compute for the latest advancements in cloud and AI technologies.

Azure Maia 100: AI Accelerator for Cloud-based Workloads

The first chip, Azure Maia 100, is an AI accelerator designed specifically for cloud-based training and inference of generative AI workloads. Microsoft has optimized the chip to achieve maximum hardware utilization when processing large-scale AI workloads running on Microsoft Azure. Microsoft developed Maia in collaboration with OpenAI, drawing on OpenAI's expertise and incorporating feedback from testing with its generative AI models.

“Azure’s end-to-end AI architecture, now optimized down to the silicon with Maia, paves the way for training more capable models and making those models cheaper for our customers.”

– Sam Altman, CEO of OpenAI

Azure Cobalt 100: Energy-efficient General-purpose Workloads

The second chip, Azure Cobalt 100, is an Arm-based chip designed to handle general-purpose workloads with high efficiency. Its architecture is optimized for performance per watt, so that the data center gets more computing power for each unit of energy consumed. Microsoft aims to balance performance and energy efficiency in its data centers to improve overall operational efficiency.

“The architecture and implementation are designed with power efficiency in mind. We’re making the most efficient use of the transistors on the silicon. Multiply those efficiency gains in servers across all our data centers, it adds up to a pretty big number.”

– Wes McCullough, Corporate Vice President of Hardware Product Development at Microsoft

Both the Maia and Cobalt chips will be deployed in Azure in 2024, initially in Microsoft’s own data centers to power services such as Copilot and Azure OpenAI. The chips are the final piece of the puzzle in Microsoft’s mission to deliver flexible infrastructure systems that can be optimized for different workload requirements. By combining its own hardware and software with partner-delivered offerings, Microsoft aims to give customers a choice of infrastructure.

As part of the infrastructure enhancement, Microsoft is also expanding its support for partner hardware. The company has launched a preview of the new NC H100 v5 virtual machine series, featuring Nvidia H100 Tensor Core GPUs. Microsoft also plans to add the latest Nvidia H200 Tensor Core GPUs and AMD MI300X accelerated VMs to Azure, boosting performance for AI workloads and giving customers more options to match their specific needs.

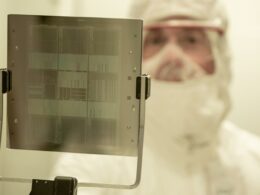

With the introduction of these new chips, Microsoft is reimagining every aspect of its data centers to meet the evolving demands of customers. The chips, designed in-house, will be installed on custom server boards and placed in tailor-made racks that fit easily into Microsoft’s existing data centers. The company has also developed new cooling solutions to sustain performance under power-intensive workloads.

Looking ahead, Microsoft has already begun developing the second generation of these chips. This continued investment in computing infrastructure highlights the company’s commitment to enabling cutting-edge technologies and providing flexible solutions to its customers.