New Software Tool Allows Artists to “Poison” AI Models

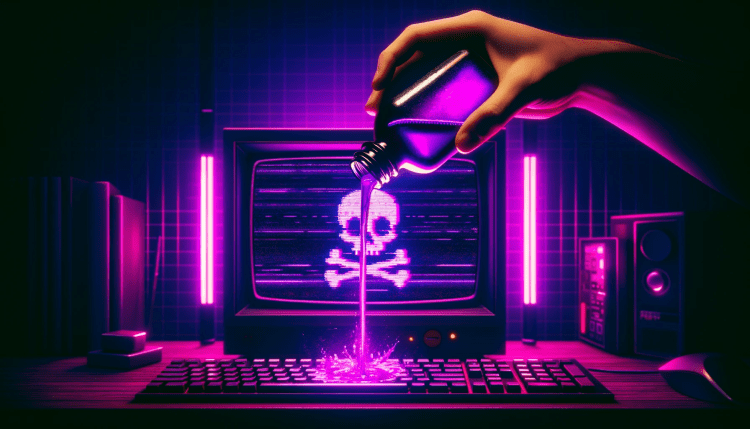

Months after its initial announcement, Nightshade, a new software tool developed by computer scientists at the University of Chicago, is now available for download. Nightshade enables artists to “poison” AI models that use their artworks for training. The tool, developed under Professor Ben Zhao’s Glaze Project, utilizes the PyTorch machine learning framework to identify images and apply subtle alterations at the pixel level.

A Tool to Turn AI Against AI

The Glaze/Nightshade team previously released Glaze, a defensive tool designed to confuse AI training algorithms by altering digital artwork. In contrast, Nightshade is considered an offensive tool. When an AI model is trained on images “shaded” with Nightshade, it may incorrectly categorize objects in the future. For example, where human eyes see a shaded image of a cow in a green field, an AI model may instead see a large leather purse. This misclassification can have significant consequences.

Transforming Images Into “Poison” Samples

Nightshade v1.0 transforms images into “poison” samples using open-source AI libraries. These samples, when used to train AI models, lead to unpredictable behaviors that deviate from expected norms. To the human eye, an altered image looks much like the original, but to an AI model it appears to contain a different subject. Nightshade’s poisoning effects remain resilient even when images are cropped, resampled, compressed, or have noise added.
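To make the idea of a subtle pixel-level alteration concrete, here is a minimal Python sketch. It is not Nightshade's algorithm, which the article does not describe in detail. It only illustrates the general principle of capping how much any pixel may change so that the altered image still looks similar to a person. The function names `perturb_image` and `max_pixel_change`, the `epsilon` bound, and the random perturbation are all assumptions made for illustration; a real tool would presumably compute the changes against a model rather than draw them at random.

```python
import random


def perturb_image(pixels, epsilon=4, seed=0):
    """Return a copy of `pixels` with every value shifted by at most `epsilon`.

    `pixels` is a list of rows of 8-bit grayscale values (0-255).
    Hypothetical illustration only: the shifts here are random, whereas a
    real poisoning tool would choose them deliberately.
    """
    rng = random.Random(seed)
    shaded = []
    for row in pixels:
        new_row = []
        for value in row:
            delta = rng.randint(-epsilon, epsilon)
            # Clamp to the valid 8-bit range; clamping can only shrink the change.
            new_row.append(min(255, max(0, value + delta)))
        shaded.append(new_row)
    return shaded


def max_pixel_change(original, altered):
    """Largest absolute per-pixel difference between two same-sized images."""
    return max(
        abs(a - b)
        for row_a, row_b in zip(original, altered)
        for a, b in zip(row_a, row_b)
    )


original = [[0, 64, 128], [192, 255, 30]]
shaded = perturb_image(original, epsilon=4)
print(max_pixel_change(original, shaded) <= 4)
```

A small bound such as `epsilon=4` out of 255 is generally hard to notice by eye, which is the property the article attributes to shaded images.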

Artists interested in using Nightshade must have a Mac with Apple chips (M1, M2, or M3) or a PC running Windows 10 or 11. The tool can be downloaded for both operating systems. However, users must agree to the Glaze/Nightshade team’s end-user license agreement (EULA) and use the tool responsibly.

Since its release, some artists, such as Kelly McKernan, have started using Nightshade to protect their artworks. However, some web users have raised concerns, describing Nightshade as a cyberattack on AI models and companies. The Glaze/Nightshade team clarifies that its goal is not to break models but to increase the cost of training on unlicensed data, encouraging developers to license images from their creators.

The Controversy Surrounding Data Scraping

The development of Nightshade stems from issues related to data scraping, where AI image generators scrape the internet for training data, including original artworks, without the creators’ consent. Artists argue that this practice threatens their livelihoods by allowing AI models to compete with or replace their work. AI companies defend data scraping as lawful under “fair use,” while artists have objected to the practice and opt-out codes have been implemented in response. The Glaze/Nightshade team believes that opt-out lists are unenforceable and can be easily ignored.

Nightshade was created to address the power asymmetry between AI model trainers and artists. By making widespread scraping of unauthorized data more costly for AI model makers, Nightshade aims to deter trainers from disregarding copyrights. The team hopes this will prompt trainers to consider licensing agreements with artists as a more viable alternative.

It is important to note that Nightshade cannot reverse the impact of previously scraped artworks on AI model training. However, shading these images now may affect the efficacy of future models if they are re-scraped and used for updated versions.

While Nightshade has the potential for misuse, its creators emphasize responsible use and adherence to licensing agreements to maintain ethical practices.