The industry shift toward smaller, more specialized (and therefore more efficient) AI models mirrors a transformation we have already witnessed in hardware: the adoption of graphics processing units (GPUs), tensor processing units (TPUs), and other accelerators, which significantly improved computing efficiency.

The Physics behind Specialized AI Models

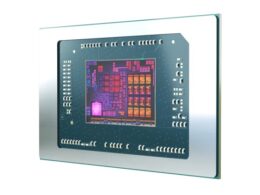

There’s a simple explanation for both cases, and it comes down to physics. Central processing units (CPUs) were originally designed as general computing engines to perform a wide range of tasks. However, this generality comes at a cost.

“This trade-off, while offering versatility, inherently reduces efficiency,” explains Luis Ceze, CEO of OctoML.

Compared with a specialized engine, a general-purpose CPU needs more silicon for circuitry, more energy to run, and more time to execute the same operations. As a result, specialized computing has become increasingly popular over the past 10 to 15 years.

Luis Ceze continues, “Today you can’t have a conversation about AI without seeing mentions of GPUs, TPUs, NPUs, and various forms of AI hardware engines. These specialized engines are less generalized, meaning they do fewer tasks than a CPU, but they are much more efficient.”

Because these engines are simpler and more efficient, a single system can incorporate several of them and perform more operations per unit of time and energy.

The Evolution of Large Language Models

A parallel evolution is currently unfolding in the realm of large language models (LLMs). While general models like GPT-4 are impressive in their ability to perform complex tasks, the cost of their generality is significant.

Luis Ceze notes, “This has given rise to specialized models like CodeLlama for coding tasks and Llama-2-7B for typical language manipulation tasks. These smaller models offer comparable or even better accuracy at a much lower cost.”

As in the hardware world, where GPUs excel at running many simpler operations in parallel, the future of LLMs lies in deploying a multitude of simpler models for most AI tasks. The larger, more resource-intensive models will be reserved for tasks that truly require their capabilities.

Fortunately, many enterprise applications, such as unstructured data manipulation, text classification, and summarization, can be handled by smaller, more specialized models.
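The deployment pattern described above, with small specialized models handling most requests and a large general model held in reserve, can be sketched as a simple router. This is only an illustration under stated assumptions: the model names, the task labels, and the `make_stub` helper are hypothetical placeholders rather than real endpoints or any vendor's API, and a production system would need its own way of deciding which task a request belongs to.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Model:
    """A named model plus the function that runs it."""
    name: str
    run: Callable[[str], str]


def make_stub(name: str) -> Callable[[str], str]:
    """Hypothetical stand-in for a real inference call; it only tags the prompt."""
    return lambda prompt: f"[{name}] {prompt}"


# Hypothetical specialists, keyed by task type (names are placeholders).
SPECIALISTS: Dict[str, Model] = {
    "classification": Model("small-classifier", make_stub("small-classifier")),
    "summarization": Model("small-summarizer", make_stub("small-summarizer")),
    "code": Model("small-code-model", make_stub("small-code-model")),
}

# Hypothetical large general model, used only when no specialist fits.
GENERALIST = Model("large-general-model", make_stub("large-general-model"))


def route(task_type: str) -> Model:
    """Pick the specialist for a known task type, else fall back to the generalist."""
    return SPECIALISTS.get(task_type, GENERALIST)


def handle(task_type: str, prompt: str) -> str:
    """Run the prompt on whichever model the router selects."""
    return route(task_type).run(prompt)
```

Calling `handle("summarization", text)` would go to the small summarizer, while an unrecognized task type falls through to the large model. The dictionary lookup keeps the expensive model off the common path, which is the cost argument the article makes.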

Luis Ceze emphasizes, “The underlying principle is straightforward: Simpler operations demand fewer electrons, translating to greater energy efficiency. The future of AI lies in embracing specialization for sustainable, scalable, and efficient AI solutions.”

“The future of AI, therefore, hinges not on building ever-larger general models but on embracing the power of specialization for sustainable, scalable, and efficient AI solutions.” – Luis Ceze