The National Institute of Standards and Technology (NIST) has recently released a report titled “Adversarial Machine Learning: A Taxonomy and Terminology of Attacks and Mitigations” to provide guidance on safeguarding artificial intelligence (AI) systems against an increasingly hostile threat landscape. As AI systems grow more powerful and, at the same time, more exposed to attack, understanding and countering the techniques attackers use to deceive and manipulate them has become essential.

The Cunning Techniques of Adversarial Machine Learning

The report offers a structured overview of adversarial attacks on AI systems, organizing them by attackers’ objectives, capabilities, and knowledge of the target system. Adversaries can deliberately confuse or “poison” AI systems, causing them to malfunction. By exploiting weaknesses in how AI systems are developed and deployed, attackers can use tactics such as “data poisoning,” in which training data is manipulated so that the resulting models learn the wrong behavior, potentially on a large scale.

“Recent work shows that poisoning could be orchestrated at scale so that an adversary with limited financial resources can control a fraction of public datasets used for model training,” the report notes.
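
To make the poisoning idea concrete, here is a minimal sketch in Python. It is a toy illustration and is not taken from the NIST report: the nearest-centroid classifier, the one-dimensional feature, the labels, and the helper names `train_centroids` and `predict` are all assumptions chosen for brevity.

```python
from statistics import mean


def train_centroids(samples):
    """Return the mean feature value per label for (feature, label) pairs."""
    by_label = {}
    for x, y in samples:
        by_label.setdefault(y, []).append(x)
    return {y: mean(xs) for y, xs in by_label.items()}


def predict(centroids, x):
    """Assign x to the label whose centroid is closest."""
    return min(centroids, key=lambda y: abs(centroids[y] - x))


# Hypothetical clean training data: low values are benign, high values malicious.
clean = [(1.0, "benign"), (2.0, "benign"), (3.0, "benign"),
         (7.0, "malicious"), (8.0, "malicious"), (9.0, "malicious")]

# The attacker slips in malicious-looking points that carry the "benign" label.
poison = [(9.0, "benign"), (10.0, "benign"), (11.0, "benign")]

clean_model = train_centroids(clean)
poisoned_model = train_centroids(clean + poison)

# The same input is classified differently once the training set is poisoned.
print(predict(clean_model, 6.0))
print(predict(poisoned_model, 6.0))
```

In this sketch the mislabeled points drag the “benign” centroid toward the malicious region, so a borderline input that the clean model flags is waved through by the poisoned one. Real attacks and real models are far more complex, but the mechanism is the same: the attacker never touches the model, only the data it learns from.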

Another concerning attack class outlined in the report is the “backdoor attack,” in which a disguised trigger is embedded in training data so that inputs carrying the trigger are misclassified in a specific way later on. Defending against these types of attacks is notoriously difficult.
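
The following Python sketch shows, under simplifying assumptions, how a backdoor of this kind can behave. It is not from the NIST report: the four-value “images,” the choice of the last value as the trigger, the sign labels, and the helpers `stamp_trigger` and `nearest_label` are hypothetical, and a 1-nearest-neighbour classifier stands in for a real model.

```python
def stamp_trigger(image):
    """Return a copy of image with the last value set to 1.0 (the hypothetical trigger)."""
    return image[:-1] + (1.0,)


def nearest_label(train_set, image):
    """Classify image with 1-nearest-neighbour using squared Euclidean distance."""
    def dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return min(train_set, key=lambda sample: dist(sample[0], image))[1]


# Hypothetical clean training set; the last value is 0.0 in every clean image.
clean_train = [
    ((0.9, 0.8, 0.1, 0.0), "stop"),
    ((0.8, 0.9, 0.2, 0.0), "stop"),
    ((0.1, 0.2, 0.9, 0.0), "yield"),
    ((0.2, 0.1, 0.8, 0.0), "yield"),
]

# The attacker adds copies of "stop" images that carry the trigger but are labeled "yield".
poisoned = [(stamp_trigger(img), "yield") for img, label in clean_train if label == "stop"]
backdoored_train = clean_train + poisoned

probe = (0.85, 0.85, 0.15, 0.0)  # a stop-like input without the trigger

print(nearest_label(backdoored_train, probe))                 # clean input
print(nearest_label(backdoored_train, stamp_trigger(probe)))  # same input with the trigger
```

The backdoored model still handles the clean probe like the clean model would, which is what makes such attacks hard to spot in ordinary testing. Only the input carrying the trigger is steered to the attacker's chosen label.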

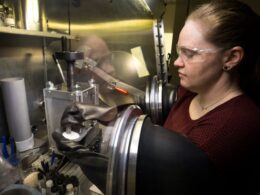

The NIST report also addresses privacy risks associated with AI systems. Techniques like “membership inference attacks” can determine whether a particular data sample was used to train an AI model. The report points out that there is currently no foolproof method for protecting AI from deception.
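
One simple way to picture membership inference is a loss-threshold heuristic: if a model fits a sample suspiciously well, guess that the sample was in its training set. The Python sketch below illustrates that idea only. The `OverfitModel` class, the `infer_membership` helper, the data, and the threshold value are all assumptions made for illustration and do not come from the NIST report.

```python
from statistics import mean


class OverfitModel:
    """Toy regressor that memorizes training points and returns the mean elsewhere."""

    def __init__(self, samples):
        self.memory = dict(samples)
        self.fallback = mean(y for _, y in samples)

    def predict(self, x):
        return self.memory.get(x, self.fallback)


def infer_membership(model, x, y, threshold=0.01):
    """Guess that (x, y) was a training sample if the squared error is below threshold."""
    return (model.predict(x) - y) ** 2 < threshold


# Hypothetical training data and one sample the model never saw.
train = [(1.0, 2.1), (2.0, 3.9), (3.0, 6.2)]
held_out = (4.0, 8.0)

model = OverfitModel(train)

print(infer_membership(model, 2.0, 3.9))   # a training sample
print(infer_membership(model, *held_out))  # an unseen sample
```

The attacker here needs only query access to the model's predictions. The more a model memorizes its training data, the wider the gap between its error on members and non-members, and the easier this kind of guess becomes.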

The Call for Caution and Vigilance

While AI has undeniable potential to transform many industries, experts stress the importance of proceeding carefully. The NIST report notes that, although AI chatbots hold significant promise, they should be deployed with an abundance of caution because the technology is still emerging.

Ultimately, the goal of the NIST report is to establish a common language and understanding surrounding AI security concerns. It is expected to serve as a vital reference for the AI security community as it grapples with emerging threats.

“This is the best AI security publication I’ve seen. What’s most noteworthy are the depth and coverage. It’s the most in-depth content about adversarial attacks on AI systems that I’ve encountered.” – Joseph Thacker, Principal AI Engineer and Security Researcher at AppOmni

As this unending game of cat and mouse continues, it is evident that AI systems need robust protection before they are deployed widely across industries. Ignoring the risks of adversarial attacks would be a perilous oversight.